When I covered libraries, frameworks and toolkits, I missed a potentially game-changing toolkit, which I will dive into today.

In this blog post, I take a first-hand look at the Unity Machine Learning Agents Toolkit.

The “Kart Racing Game with Machine Learning in Unity! (Tutorial)” YouTube video by Unity links to the toolkit download and to a kart racing example project. The example project is based on Unity version 2018.4.

What is the Unity ML-Agents Toolkit?

The Unity Machine Learning Agents Toolkit (ML-Agents) is an open-source Unity plugin that enables games and simulations to serve as environments for training an AI. The AI can be trained using a variety of learning methods, including reinforcement learning, imitation learning, neuroevolution, or other machine learning methods through a simple-to-use Python API. The toolkit also provides implementations (based on TensorFlow) of state-of-the-art algorithms to enable game developers and hobbyists to easily train AI for 2D, 3D and VR/AR games. After training, the AI may be used for multiple purposes, including controlling NPC behavior (in a variety of settings such as multi-agent and adversarial), automated testing of game builds and evaluating different game design decisions pre-release. The ML-Agents toolkit is mutually beneficial for both game developers and AI researchers as it provides a central platform where advances in AI can be evaluated on Unity’s rich environments and then made accessible to the wider research and game developer communities. (cf. [https://github.com/Unity-Technologies])

Kart Racing Game: Introduction

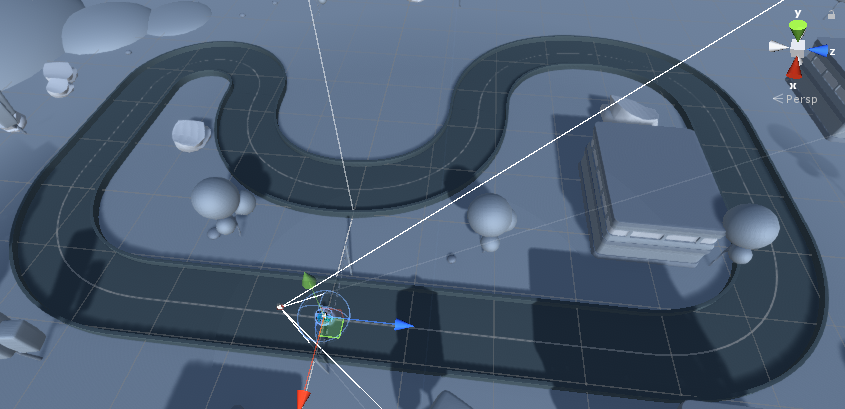

The Kart Racing game that is provided alongside the toolkit is based on a simple concept. Like in most racing games, players have to navigate a given course for a given number of laps. In this case, the RL agent receives a small positive reward for moving in the right direction, a large positive reward for reaching a checkpoint and a large negative reward for crashing into a wall. The course of the game is fixed, as shown in Figure 1.
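To make the reward scheme above more concrete, here is a minimal Python sketch. It is not the code of the example project: the names `StepInfo` and `step_reward` as well as all reward magnitudes are assumptions chosen for illustration. Only the three reward sources (direction, checkpoint, wall) come from the description above.

```python
from dataclasses import dataclass

# Assumed magnitudes for illustration only; the example project may use other values.
PROGRESS_REWARD = 0.01    # small bonus for moving in the right direction
CHECKPOINT_REWARD = 1.0   # large bonus for reaching a checkpoint
WALL_PENALTY = -1.0       # large penalty for crashing into a wall


@dataclass
class StepInfo:
    """Hypothetical summary of what happened to one kart during one step."""
    moving_forward: bool
    reached_checkpoint: bool
    hit_wall: bool


def step_reward(info: StepInfo) -> float:
    """Sum up the reward for a single step from the three reward sources."""
    reward = 0.0
    if info.moving_forward:
        reward += PROGRESS_REWARD
    if info.reached_checkpoint:
        reward += CHECKPOINT_REWARD
    if info.hit_wall:
        reward += WALL_PENALTY
    return reward


# Example: a kart that drives forward and passes a checkpoint in the same step.
print(step_reward(StepInfo(moving_forward=True, reached_checkpoint=True, hit_wall=False)))
```

The large gap between the small per-step bonus and the checkpoint bonus is a common way to keep an agent from merely creeping forward, but the right ratio would have to be tuned per project.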

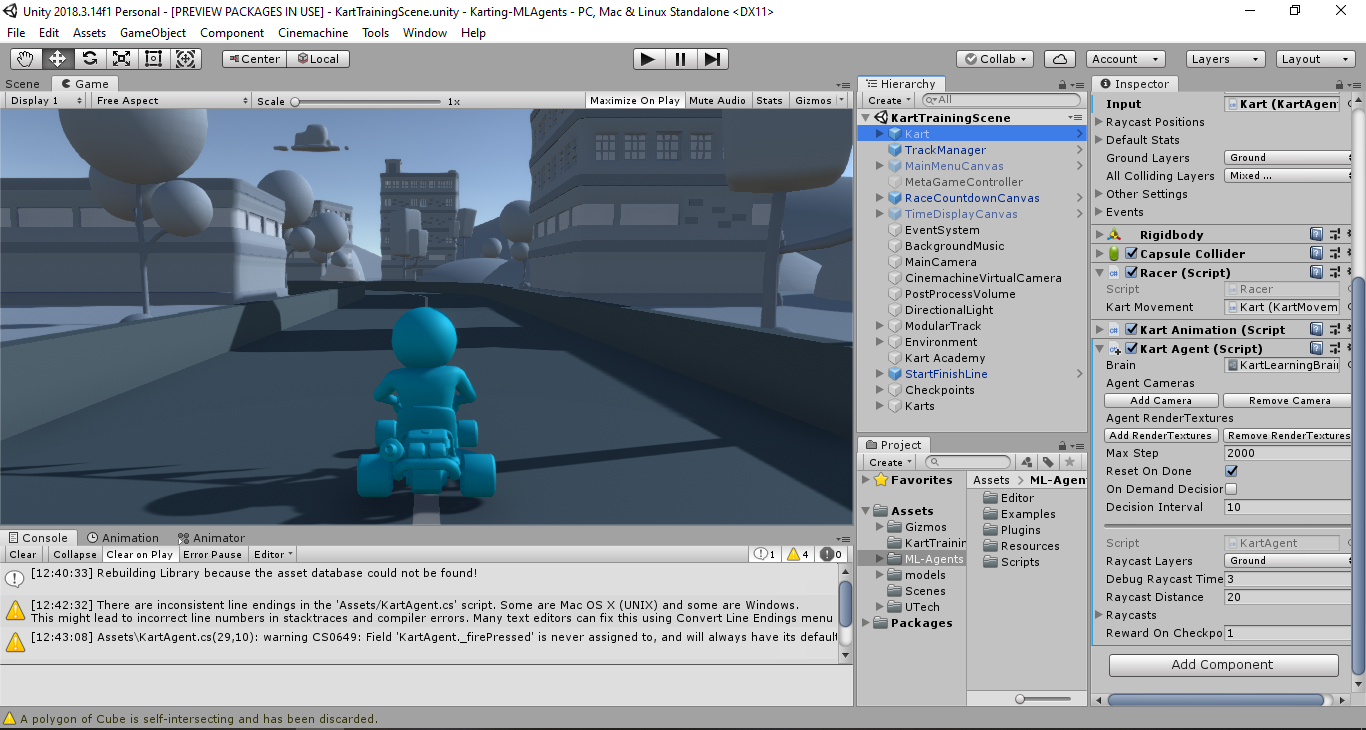

The scene of this example project is structured into the following elements, as shown in Figure 2.

- The “Kart” prefab holds the Kart Agent script, which represents the learning AI. In this case, the agent uses nine raycasts to decide on an action. The raycasts are attached to the Kart as child objects.

- The “TrackManager” prefab checks whether a kart has reached a checkpoint.

- The “MainMenuCanvas” prefab holds a UI template that may be enabled during play.

- The “MetaGameController” object controls UI-related elements such as the MainMenuCanvas and the RaceCountdownCanvas.

- The “RaceCountdownCanvas” prefab triggers and controls a Playable Director that is tied to a RaceStart timeline asset. It manages the start of the race.

- The “TimeDisplayCanvas” prefab displays the time elapsed in the current lap.

- The “EventSystem” object checks for UI-related input.

- The “BackgroundMusic” object has an Audio Source component attached that plays a given Audio Clip.

- The “MainCamera” object, besides being the main point of view, has a second, virtual camera attached to it as a component, as well as a post-processing layer that improves the visuals of the game.

- The “CinemachineVirtualCamera” object helps the main camera keep track of the most important game objects. (cf. [https://docs.unity3d.com])

- The “PostProcessVolume” object adds graphical effects to the game.

- The “DirectionalLight” object is the main light source of the scene.

- The “ModularTrack” object contains a number of ModularTrack prefabs which form the course of the racing game.

- The “Environment” object contains all objects that are used to create an atmospheric ambience around the racing course, including buildings, trees, hills and clouds.

- The “KartAcademy” object serves as a means to train several RL agents simultaneously.

- The “StartFinishLine” prefab is the actual goal asset in the game.

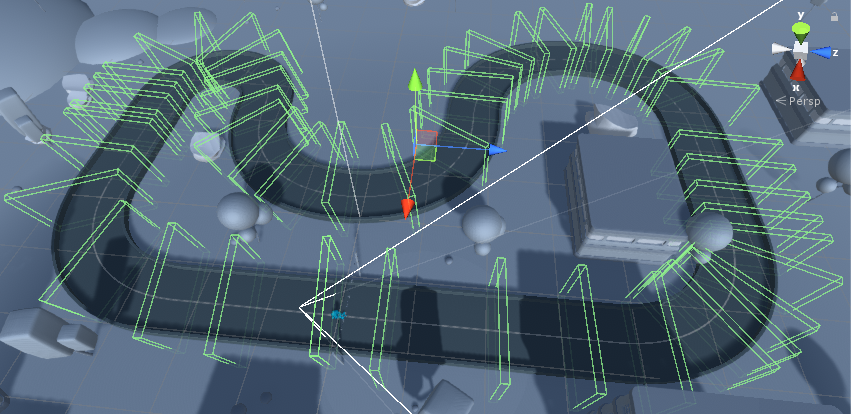

- The “Checkpoints” object contains a number of checkpoint prefabs that are used as invisible triggers in the game, indicating successful maneuvering by an agent. The checkpoints are not spaced evenly, as shown in Figure 3.

- The “Karts” object contains a number of kart prefabs, each of which is controlled by a different agent during play.
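The Kart agent described in the list above decides on its actions based on nine raycasts. As a rough illustration of how such rays can become numeric observations, here is a minimal 2D sketch in Python. It is not taken from the example project: the fan angle, the maximum ray length, the wall representation and all function names are assumptions. Only the number of rays comes from the project description.

```python
import math

NUM_RAYS = 9          # from the post: the agent uses nine raycasts
FAN_ANGLE = math.pi   # assumption: the rays cover 180 degrees ahead of the kart
MAX_DISTANCE = 10.0   # assumption: maximum ray length in world units


def ray_segment_distance(origin, angle, seg_a, seg_b, max_dist=MAX_DISTANCE):
    """Distance along a ray to a wall segment, or max_dist if the ray misses it."""
    ox, oy = origin
    dx, dy = math.cos(angle), math.sin(angle)
    ax, ay = seg_a
    ex, ey = seg_b[0] - ax, seg_b[1] - ay
    denom = dx * ey - dy * ex
    if abs(denom) < 1e-12:
        return max_dist  # ray and wall are (nearly) parallel
    # Solve origin + t * direction == seg_a + u * (seg_b - seg_a) for t and u.
    t = ((ax - ox) * ey - (ay - oy) * ex) / denom
    u = ((ax - ox) * dy - (ay - oy) * dx) / denom
    if 0.0 <= t <= max_dist and 0.0 <= u <= 1.0:
        return t
    return max_dist


def ray_observations(origin, heading, walls):
    """Cast NUM_RAYS rays in a fan around the heading; return distances scaled to [0, 1]."""
    observations = []
    for i in range(NUM_RAYS):
        angle = heading - FAN_ANGLE / 2 + i * FAN_ANGLE / (NUM_RAYS - 1)
        nearest = min(
            (ray_segment_distance(origin, angle, a, b) for a, b in walls),
            default=MAX_DISTANCE,
        )
        observations.append(nearest / MAX_DISTANCE)
    return observations


# Example: a kart at the origin facing a single wall two units ahead.
walls = [((2.0, -10.0), (2.0, 10.0))]
obs = ray_observations((0.0, 0.0), 0.0, walls)
print([round(o, 2) for o in obs])
```

A value close to 0 means a wall is right in front of that ray, while 1 means nothing was hit within range. A vector like this is the kind of input a policy could map to steering and acceleration.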

Kart Racing Game: Tests

Switching into Play mode for the first time quickly led me to make some minor adjustments. On the one hand, the camera follows a single agent instead of showing all agents at once, as shown in the video below. On the other hand, beware: the sound of 20 roaring engines on top of the background music practically begged me to mute the application.

Thus, with the view switched and system muted, the next run started rather nicely, as shown in the video below (for your own sake, please mute the video). The red and green lines are raycasts used by the agents for decision-making. As we can see, a few agents get stuck on the track while others adapt rather quickly.

After 45 minutes, the agents showed improved performance, as shown in the video below. All agents take higher risks in the curves, which reduces their lap times. Some agents mistime their inputs and others are still adapting them, but all in all the results are good. This training cycle could be extended even further, but my main points are already covered, so the training ends here.

As we can see, this toolkit fulfills its purpose. If the kart agents were trained for several hours, they could already be put into the game as opponents. If the course were adjusted, the agents might show how the new course could be mastered. If there were a bug in the game, the agents would probably exploit it.

Moral

My plans to develop a tool that applies an RL agent to any given Unity game have been made obsolete by this existing toolkit. As prepared as I was to go through its development, all motivation to do so is now lost, because the tool has become unnecessary. In fact, it was unnecessary from the beginning. On the other hand, testing projects with this toolkit might be a lot easier than developing my own means to do so, thus I shall accept this fate.

The upcoming blog posts will be about applying this toolkit to two different example projects, as originally planned months ago.

Sources

[https://github.com/Unity-Technologies]

Unity ML-Agents Toolkit (Beta): https://github.com/Unity-Technologies/ml-agents (24/02/2020)

[https://docs.unity3d.com]

Class CinemachineVirtualCamera: https://docs.unity3d.com/Packages/com.unity.cinemachine@2.1/api/Cinemachine.CinemachineVirtualCamera.html (24/02/2020)